Orachrome Lighty is a great tool for monitoring our oracle databases even in case we do not have the diagnostic packs license or in case we are using the standard edition. It really on statspack and L-ASH for collecting the needed information.

This blog post is not about the different feature offered by the tool or how to use, there are already many articles covering that. I will focus on the overhead I noticed when trying this great tool and the different solution that can be implemented to alleviate them.

After enabling monitoring for different database version (11.2.0.4/12.2.0.1/19C) (Rac/Standalone) I noticed that the “LIGHTY_PKG.SNAP» which is scheduled to run every 10 second by default is taking some time to execute (Between 1 and 3 second) in database with big shared pool , a lot of no shared SQL and huge sql plans . This may not be a problem for single database server, but for highly consolidated database environment where the CPU is over-provisioned (such in R&D environment where we may have a lot of IDLE instances) this can become problematic.

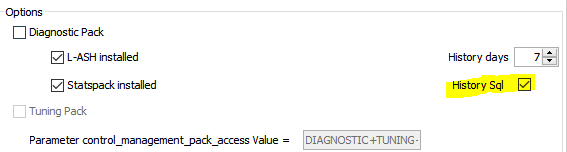

The slowdown is related to the capture of the SQL history, if we try to disable it the response time will be much much better.

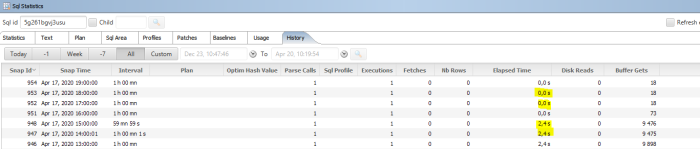

It take 2.4 second to do a single snapshot in average with the sql history enabled !

Using statspack report we can easy identify the slow query :

And here is the plsql code responsible for maintaining the SQL History:

So, let us take a closer look at the last statement, which is the slowest one:

So basically we are getting all the information from both tables and then throwing must of the data away (The data already inserted in l_ash_sql_plan table) . If we rewrite the query to get an anti-join :

It’s better but still the access to the FIXED_TABLE “X$KQLFXPL” is slow. To reduce the access to the fixed table only for needed information I rewritten the query this way :

That’s much better!

I have applied the same strategy to the remaining code, and here is the new sql history plsql code :

After deploying the new code, it take now only 0.4 second on average for taking the snapshot which is great!

To reduce the overhead much more we can take a snap of the sql history at much less frequency than the active session history such as every 1 minute for exemple. This is very well handled in the good old LAB128. I added a little hack in current code to do that:

The snap now take on average 0.1 second (remember it was 2.4 second!)

That’s it 🙂 we can know have the data we need with much lesser overhead!

Hello Mr. Mahmoud Hatem,

thank you for your great contribution. Our team decided to include it in the next version of Lighty.

Regards,

Light teams

Hi,

I am glad that i could help, That’s great , Thanks 🙂